1. What is LocusGraph?

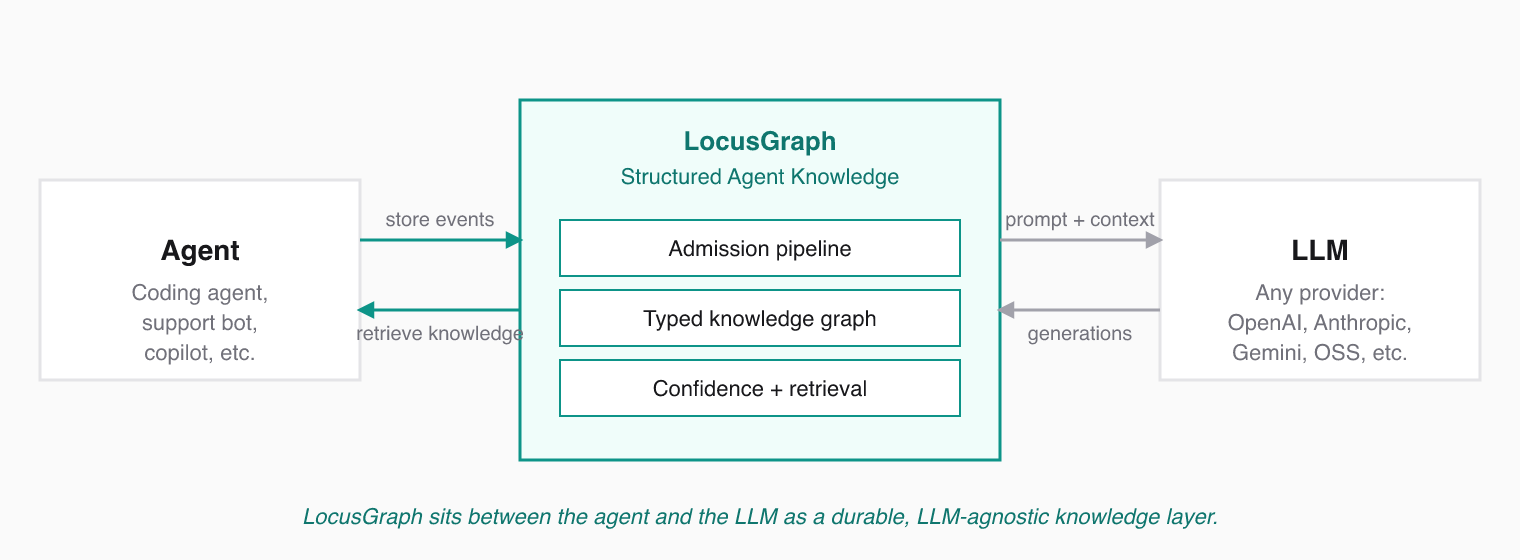

LocusGraph is a typed knowledge graph with an admission pipeline, confidence scoring, and semantic retrieval. It is designed to sit between an AI agent and its LLM, replacing raw chat history or plain vector-search memory with structured knowledge that compounds.

In One Line

LocusGraph turns what your agents learn into structured knowledge that compounds, so events become patterns, patterns become skills, and every session starts smarter.

What It Actually Does

Every useful event your agent produces — a fact, action, decision, observation, or feedback — enters LocusGraph and is admitted, typed, scoped, linked to related knowledge, and given a confidence score. Once admitted, that knowledge is permanent and retrievable across sessions.
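To make the admission step more concrete, here is a minimal Python sketch of what an admitted event record might look like. The five event types come from the paragraph above; everything else (the `AdmittedEvent` fields, the hypothetical `admit` function, the 0.5 default confidence, and the assumption that confidence lies in [0, 1]) is invented for illustration and is not LocusGraph's actual schema or SDK. Linking is represented only as a field of `(link_type, target_id)` pairs.

```python
from dataclasses import dataclass
from enum import Enum


class EventType(Enum):
    # The five event kinds named in the overview.
    FACT = "fact"
    ACTION = "action"
    DECISION = "decision"
    OBSERVATION = "observation"
    FEEDBACK = "feedback"


@dataclass(frozen=True)
class AdmittedEvent:
    # Hypothetical record shape; not LocusGraph's real schema.
    event_type: EventType
    content: str
    context: str                                   # scope, e.g. "project:checkout" (illustrative)
    confidence: float                              # assumed to lie in [0.0, 1.0]
    links: tuple[tuple[str, int], ...] = ()        # (link_type, target_id) pairs


def admit(raw: dict) -> AdmittedEvent:
    """Hypothetical admission step: type, scope, and score a raw event.

    Raises ValueError if the event cannot be admitted.
    """
    # Typing: reject anything that is not one of the five known kinds.
    try:
        event_type = EventType(raw["type"])
    except (KeyError, ValueError) as exc:
        raise ValueError(f"unknown or missing event type: {raw.get('type')!r}") from exc

    content = str(raw.get("content", "")).strip()
    if not content:
        raise ValueError("event has no content")

    # Scoping: fall back to an assumed "default" context.
    context = str(raw.get("context", "default"))

    # Scoring: assumed neutral default of 0.5 when no confidence is given.
    confidence = float(raw.get("confidence", 0.5))
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence out of range: {confidence}")

    return AdmittedEvent(event_type, content, context, confidence, tuple(raw.get("links", ())))
```

For example, `admit({"type": "decision", "content": "Use Postgres for orders", "context": "project:checkout", "confidence": 0.9})` returns a frozen `AdmittedEvent`, while an event with type `"rumor"` is rejected with a `ValueError`. Making the record immutable mirrors the idea that admitted knowledge is permanent.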

LocusGraph exposes five operations:

- Store typed events from agents, users, systems, validators, and executors.

- Retrieve validated knowledge with semantic search and context filters.

- Connect knowledge through `reinforces`, `contradicts`, `extends`, and `related_to` links.

- Organize knowledge into contexts and isolated graph workspaces.

- Reason over accumulated knowledge to generate insights and recommendations.

What LocusGraph Is Not

| It is not... | Because... |
|---|---|
| A chat history buffer | Chat history is recall. LocusGraph stores understanding. |
| A plain vector store | Vector stores retrieve similar text. LocusGraph retrieves validated knowledge. |
| A new LLM provider | LocusGraph is LLM-agnostic: it works behind any model. |
| Just "agent memory" | Memory means recall. Knowledge means understanding. LocusGraph delivers the latter. |

The category is Structured Agent Knowledge. "Structured" separates LocusGraph from dump-everything vector stores. "Agent" says exactly who it is for. "Knowledge" is a step above memory.