Managing Context Windows

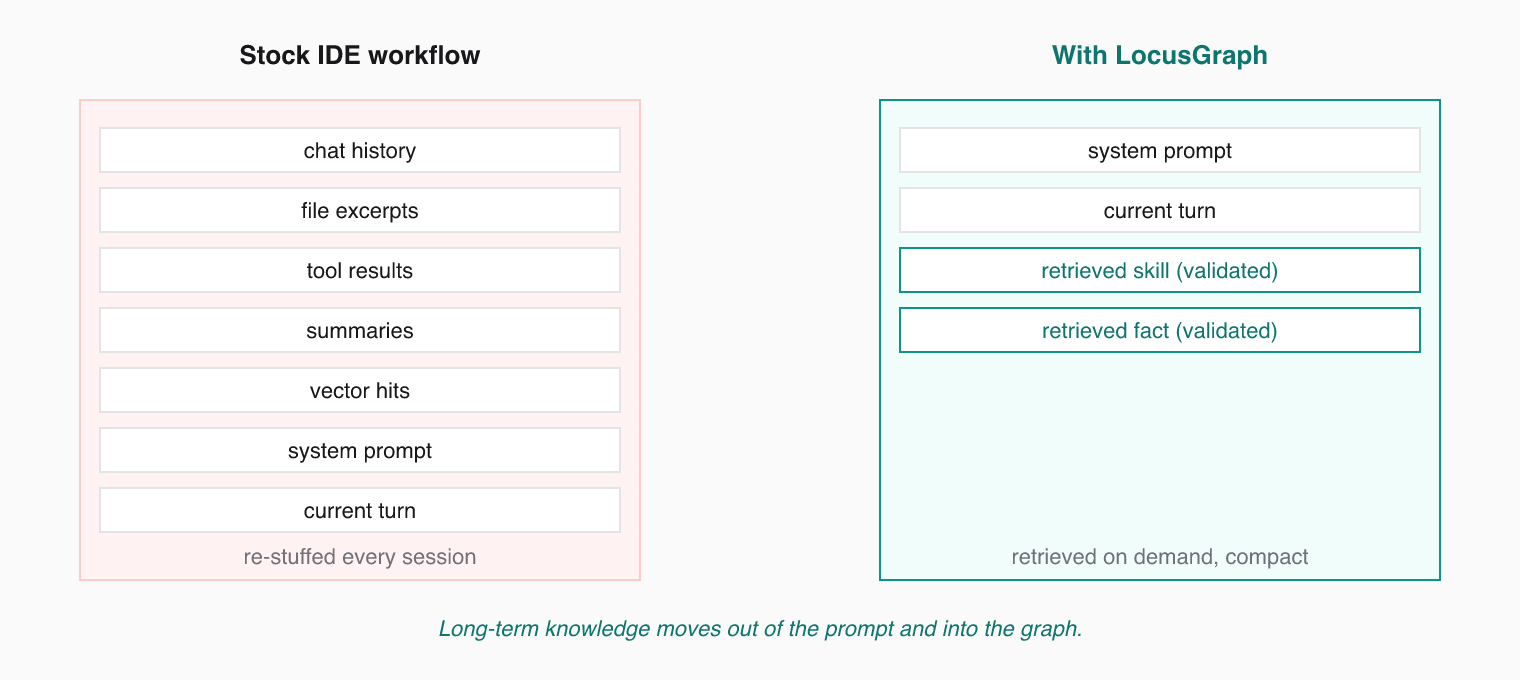

Agent context windows are finite. Every token counts. Structured Agent Knowledge keeps the right learnings in front of the model and the rest out of the prompt.

The Problem

Large language models have fixed context windows. Fill them with irrelevant text and the agent loses focus. Fill them with too little and the agent lacks the knowledge it needs. The traditional fix — stuffing chat history and vector hits into every prompt — burns tokens and still fails to compound learning.

LocusGraph changes this. Long-term knowledge moves out of the prompt and into a structured knowledge graph. The agent retrieves compact, validated knowledge only when it needs it.

Retrieve Only What Matters

The first rule: do not dump the entire graph into the prompt. Use specific queries and filters to pull only the knowledge relevant to the current task.

{

"tool": "retrieve_memories",

"arguments": {

"query": "payment validation edge cases",

"context_types": { "error": ["payment_validation"] },

"limit": 5

}

}Five highly relevant facts beat fifty loosely related ones.

The Graduation Chain

LocusGraph's admission pipeline naturally compresses experience through graduation:

event -> pattern -> skill- Event — a single observation, action, decision, or fact gets recorded.

- Pattern — repeated events get reinforced, boosting confidence.

- Skill — graduated knowledge that the agent can retrieve as a single, dense node.

Each step is denser and more actionable than the last. When your agent retrieves a skill: context, it gets the distilled lesson without needing the full history of mistakes that led there. That is how token usage stays small even as the agent gets wiser.

Design your agent to graduate knowledge. When it solves the same problem three times, store a skill event that summarizes the solution. Link it to the original events with extends.

Summarization

For long-running agents, summarize periodically. Store a summary event that captures the key points from a session or project phase, then link it to the originals with extends.

{

"tool": "store_event",

"arguments": {

"context_id": "session:2025_03_19_summary",

"event_kind": "observation",

"source": "agent",

"payload": {

"topic": "session summary",

"value": "Fixed 3 payment bugs. Root cause was missing null checks in middleware. Added validation layer."

},

"extends": ["session:2025_03_19"]

}

}Future retrievals pull the summary instead of replaying the entire session.

Pruning Stale Knowledge

You do not need to manually delete old knowledge. LocusGraph handles this through confidence scoring:

- Contradicted loci lose confidence and drop in retrieval ranking.

- Unreinforced loci stay at baseline confidence and get outranked by reinforced knowledge.

- Reinforced loci rise to the top naturally and graduate into skills.

The graph acts as a living filter. Validated knowledge surfaces. Stale or incorrect knowledge fades.

Token Cost Heuristics

| Strategy | Typical token impact |

|---|---|

| Tight scoping + low limit | ~5x smaller than dumping chat history |

Skill-level retrieval (graduated skill: contexts) | ~10x smaller than raw event replay |

Summarized sessions linked with extends | Replaces hundreds of session messages with one node |

| Default vector RAG without filters | Largest — competes hardest with model output budget |

Guidelines

| Strategy | When to Use |

|---|---|

| Tight scoping + low limit | Focused tasks with clear context needs |

| Broad query + moderate limit | Exploration and discovery phases |

| Summarization | End of sessions or project milestones |

| Graduation (event to pattern to skill) | Recurring problems the agent should internalize |