8. Tokens in Current IDEs vs LocusGraph

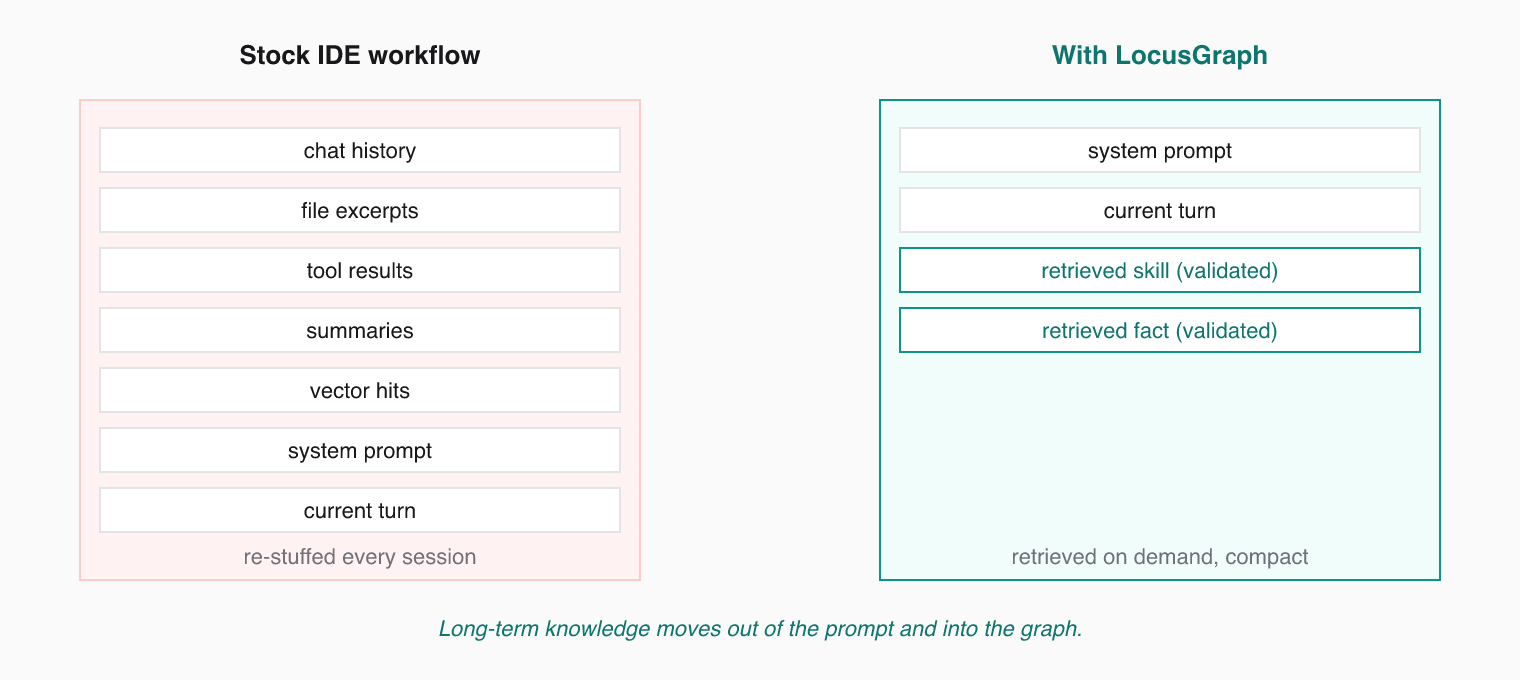

In current AI IDE workflows, tokens are spent stuffing the prompt with chat history, file excerpts, tool results, summaries, and vector-retrieved snippets. It works for the current turn but it is expensive and temporary. The same context has to be reintroduced again later.

With LocusGraph, long-term learning moves out of the prompt and into a structured knowledge graph. The agent retrieves compact, validated knowledge only when it needs it.

What Eats Tokens Today

Most AI IDE setups, by default, send the model on every turn:

- chat history

- file excerpts

- tool results

- summaries of past sessions

- vector-store hits

- system prompt

- the user's current message

That stack works for the current turn but it is rented context. It has to be reintroduced again later. Every new session pays the same token cost to rebuild the same picture.

What LocusGraph Sends Instead

When LocusGraph is the knowledge layer, the prompt for a turn is:

- system prompt

- the user's current message

- a small number of validated, retrieved knowledge nodes scoped to the current task

That is it. The agent does not need to carry its history into the prompt because its history is already shaped into knowledge it can query.

Why This Compounds

| Strategy | Typical token impact |

|---|---|

| Tight scoping + low limit | ~5x smaller than dumping chat history |

Skill-level retrieval (graduated skill: contexts) | ~10x smaller than raw event replay |

Summarized sessions linked with extends | Replaces hundreds of session messages with one node |

| Default vector RAG without filters | Largest — competes hardest with output budget |

As the graph matures and skills graduate, retrievals get smaller, not bigger. A pattern that took 30 events to reinforce can be retrieved as a single skill node when the agent needs it.

The Token Math

For a long-running coding agent across many sessions, the difference shows up as:

- fewer tokens per turn (retrieved knowledge is dense)

- fewer tokens per session (no need to rebuild context)

- fewer tokens per month (skills graduate, replays shrink)

- and importantly: less drift in agent quality, because the model gets the same validated inputs every time.

The best way to lower an agent's token bill is not to compress prompts harder. It is to stop sending the same things over and over by moving long-term knowledge out of the prompt.