4. LLM Agnostic

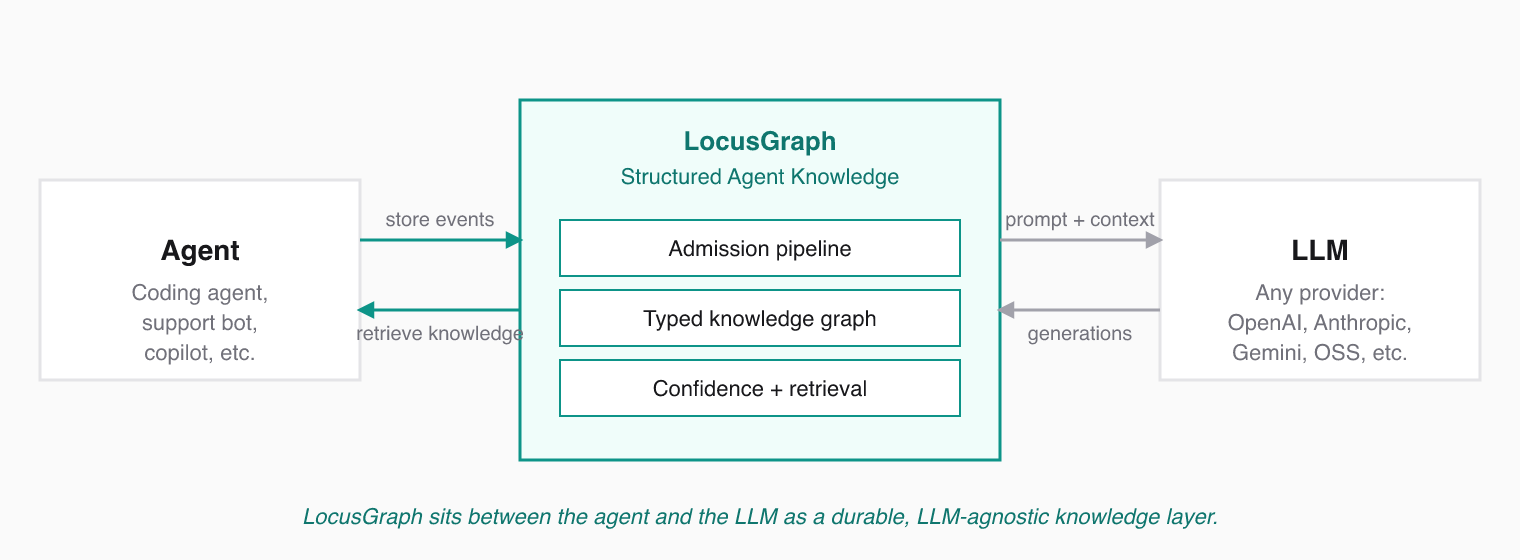

LocusGraph is LLM agnostic because the knowledge layer lives outside the model. The same Structured Agent Knowledge can be used with different LLMs, IDEs, agents, and orchestration frameworks without locking your knowledge to one provider.

What Stays the Same When You Swap Models

The model can change. The agent framework can change. The IDE can change. The knowledge graph stays the durable layer that carries forward what your agent has learned.

| Layer | Often changes | LocusGraph behavior |
|---|---|---|
| LLM provider | Yes | Unaffected: knowledge does not live in the model. |
| Agent framework | Yes | Unaffected: the graph is reachable from any framework. |
| IDE / client | Yes | Unaffected: the same graph is reachable over MCP. |
| Vector index | Yes | Unaffected: semantic retrieval lives inside LocusGraph. |
| Structured Agent Knowledge | No | Persists across all of the above. |

How That's Achieved

LocusGraph exposes its operations through:

- A typed HTTP API that any backend can call.

- SDKs in TypeScript, Python, and Rust for direct application use.

- An MCP endpoint for any compatible client (Claude Code, Cursor, Amp, ChatGPT, custom).

- Integrations like LangChain that adapt the graph to popular agent frameworks.

The same graph is reachable from all of them. A skill stored from your TypeScript backend is retrievable from Claude Code in the same project, because they both bind to the same graph_id.
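The shared-binding idea can be illustrated with a small, self-contained sketch. It does not use the LocusGraph SDK or API; `InMemoryGraphService`, `Client`, `store_skill`, `retrieve_skill`, and the `project-123` identifier are hypothetical names chosen for illustration. The only point it makes is that two different entry points see the same knowledge when they bind to the same `graph_id`.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class InMemoryGraphService:
    """Hypothetical stand-in for a shared knowledge service (not the real API)."""
    graphs: Dict[str, Dict[str, str]] = field(default_factory=dict)

    def store(self, graph_id: str, key: str, value: str) -> None:
        # Knowledge is partitioned by graph_id, not by the client that wrote it.
        self.graphs.setdefault(graph_id, {})[key] = value

    def retrieve(self, graph_id: str, key: str) -> Optional[str]:
        return self.graphs.get(graph_id, {}).get(key)


@dataclass
class Client:
    """Any entry point (SDK, HTTP, MCP client); only the graph_id binding matters."""
    name: str
    service: InMemoryGraphService
    graph_id: str

    def store_skill(self, key: str, value: str) -> None:
        self.service.store(self.graph_id, key, value)

    def retrieve_skill(self, key: str) -> Optional[str]:
        return self.service.retrieve(self.graph_id, key)


service = InMemoryGraphService()
backend = Client("backend-sdk", service, graph_id="project-123")
ide = Client("ide-mcp", service, graph_id="project-123")
other = Client("other-project", service, graph_id="project-999")

# A skill written by the backend is visible to the IDE client on the same graph.
backend.store_skill("deploy", "Run migrations before restarting workers.")
print(ide.retrieve_skill("deploy"))
print(other.retrieve_skill("deploy"))
```

In this sketch the second client retrieves what the first one stored, while a client bound to a different `graph_id` gets nothing back. A real deployment would replace the in-memory dictionary with calls to the service.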

LLM agnostic also means provider-cost agnostic. As pricing or capability shifts across models, you can switch without retraining or re-ingesting your accumulated agent knowledge.
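As a rough sketch of what switching providers can look like when knowledge sits outside the model, the example below passes the same retrieved knowledge to two interchangeable model callables. Everything here is an assumption made for illustration: `KNOWLEDGE`, `build_prompt`, `run_agent`, `model_a`, and `model_b` are hypothetical, and the "models" are stubs that echo their prompt rather than real provider clients.

```python
from typing import Callable, List

# Hypothetical knowledge already retrieved from the external knowledge layer.
KNOWLEDGE: List[str] = [
    "Prefer small pull requests.",
    "Run the linter before committing.",
]


def build_prompt(task: str, knowledge: List[str]) -> str:
    """Assemble a prompt from retrieved knowledge; independent of any model."""
    context = "\n".join(f"- {item}" for item in knowledge)
    return f"Known practices:\n{context}\n\nTask: {task}"


def model_a(prompt: str) -> str:
    # Stub standing in for one provider's completion call.
    return f"[model-a] saw:\n{prompt}"


def model_b(prompt: str) -> str:
    # Stub standing in for a different provider's completion call.
    return f"[model-b] saw:\n{prompt}"


def run_agent(task: str, model: Callable[[str], str], knowledge: List[str]) -> str:
    """The model is a plain parameter; the knowledge passed in does not change."""
    return model(build_prompt(task, knowledge))


reply_a = run_agent("Review this change", model_a, KNOWLEDGE)
reply_b = run_agent("Review this change", model_b, KNOWLEDGE)  # provider swapped
print(reply_a)
print(reply_b)
```

Swapping `model_a` for `model_b` changes a single argument. The knowledge list and the prompt-building step are untouched, which is the property the paragraph above describes.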

Why This Compounds

Agent setups change every few months. Models get faster, cheaper, or more capable. Frameworks rise and fall. The teams that move fastest are the ones whose knowledge layer is not locked to any of those moving parts. LocusGraph is designed to be that durable substrate.